From Playtest Notes to Narrative Analytics: What to Measure (and Ignore) in Your Early Questas Builds

Early builds are where your interactive stories learn who they are.

When you’re just getting started with Questas, it’s tempting to obsess over dashboards and funnels, or to collect every scrap of feedback from every playtester. But if you treat your very first prototypes like a full‑blown product launch, you’ll drown in noise and miss the handful of signals that actually matter.

This post is about focusing your attention where it counts: turning messy playtest notes into narrative analytics that help you decide what to cut, what to double down on, and what to ignore—for now.

Why early measurement matters (and why it’s different from “growth analytics”)

Early Questas builds are not about scale. They’re about finding the heartbeat of your story:

- Which choices feel meaningful?

- Where do people lean in, slow down, or replay?

- Which scenes are doing heavy lifting—and which are just set dressing?

If you skip measurement at this stage, you risk:

- Polishing the wrong branches. You’ll spend hours generating AI visuals and micro‑videos for paths no one actually cares about.

- Over‑complicating your structure. You’ll keep adding branches instead of tightening the few that deliver the biggest emotional or learning payoff.

- Misreading enthusiasm. A handful of vocal friends saying “this is cool” is not the same as people choosing to replay or share.

But the flip side is just as dangerous: over‑measuring.

If you try to track everything from day one—completion rates, click‑throughs, heatmaps, device breakdowns—you’ll:

- Burn time instrumenting instead of building.

- Make decisions based on tiny sample sizes.

- Lose the qualitative texture that makes early playtests so valuable.

The goal at this stage is narrative analytics, not growth analytics: data that helps you understand how your story behaves, not how your funnel performs.

Start with a question, not a dashboard

Before you run a single playtest, write down 1–3 questions you want this build to answer. For example:

- “Do players understand what’s at stake by the second scene?”

- “Which of these three character archetypes do people naturally gravitate toward?”

- “Is this branching depth (three layers) too much for a 5‑minute experience?”

Once you have those questions, you can decide what to measure right now and what to postpone.

A simple template to frame your early Questas tests

For each build, jot down:

- Purpose of this build

  e.g., “Test whether the ‘mentor betrayal’ twist lands emotionally.”

- Who you’re testing with

  e.g., “Three colleagues who match our target learner profile.”

- One primary behavior to watch

  e.g., “Do they replay the branch where they trust the mentor?”

- Two or three questions to ask afterward

  e.g., “Where did you feel most tension?” “Which choice felt fake or ‘obvious’?”

Everything you measure should tie back to that purpose. If it doesn’t, it’s a distraction.

The three metrics that matter most in early builds

You don’t need 20 metrics. You need three that tell you whether the story is working at all.

1. Path completion (per branch, not overall)

Instead of obsessing over “overall completion rate,” focus on whether people finish the specific paths you care about.

Ask:

- When players choose Branch A vs. Branch B, which one do they actually finish?

- Where along each branch do they drop?

- Do they drop because they’re confused, bored, or satisfied early?

In Questas, you can map this directly onto your visual editor: highlight the scenes that represent your critical branches and note where testers naturally stop.

What to do with this:

- If a key branch has low completion and people can’t explain why, that usually points to a pacing or clarity issue.

- If they stop because they feel they’ve already reached a satisfying resolution, you might have accidentally written an early “true ending.” Either embrace it or move that payoff deeper into the branch.
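If you jot down, for each tester, which branch they chose and the last scene they reached, per-branch completion and drop points take only a few lines to compute. Here is a minimal Python sketch under stated assumptions: the branch layout, scene IDs, and record format are hypothetical stand-ins that you fill in by hand, not a Questas export format.

```python
from collections import Counter, defaultdict

# Hypothetical branch layout: ordered scene IDs for each branch you care about.
# The IDs are made up for illustration; use the labels from your own story map.
BRANCHES = {
    "A_trust_mentor": ["s3a", "s4a", "s5a", "end_a"],
    "B_confront_mentor": ["s3b", "s4b", "end_b"],
}

# One hand-recorded row per tester: the branch they chose and the last scene reached.
sessions = [
    {"tester": "t1", "branch": "A_trust_mentor", "last_scene": "end_a"},
    {"tester": "t2", "branch": "A_trust_mentor", "last_scene": "s4a"},
    {"tester": "t3", "branch": "B_confront_mentor", "last_scene": "end_b"},
    {"tester": "t4", "branch": "B_confront_mentor", "last_scene": "s3b"},
    {"tester": "t5", "branch": "A_trust_mentor", "last_scene": "s4a"},
]


def branch_report(sessions, branches):
    """Completion rate per branch, plus the scenes where non-finishers stopped."""
    started, finished = Counter(), Counter()
    drops = defaultdict(Counter)
    for s in sessions:
        branch = s["branch"]
        started[branch] += 1
        # A branch counts as finished only if the tester reached its final scene.
        if s["last_scene"] == branches[branch][-1]:
            finished[branch] += 1
        else:
            drops[branch][s["last_scene"]] += 1
    return {
        b: {"completion": finished[b] / started[b], "drops": dict(drops[b])}
        for b in started
    }


for branch, stats in branch_report(sessions, BRANCHES).items():
    print(branch, stats)
```

With only five testers the percentages are rough. The drop points (here, two testers stopping at `s4a`) are usually more actionable, because they tell you which scene to ask about in the debrief.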

2. Choice friction (where players hesitate, not just what they click)

The moment before a click is where your narrative is doing its real work.

Track:

- Where players pause before making a choice.

- Which choices they ask clarifying questions about (“Wait, what does ‘confront’ mean here?”).

- Which options they ignore instantly because the outcome feels too obvious or irrelevant.

You can capture this with:

- Screen recordings (with consent).

- A simple timer during live tests: “You hesitated for ~10 seconds here—what was going through your head?”

- Quick post‑choice questions: “On a scale of 1–5, how tough was that decision?”

This is where the approach in “The Minimal Viable Quest: Tiny, Three-Choice Questas Formats That Still Deliver Big Insight” becomes powerful: a tiny build with just three meaningful choices can give you far more insight into choice friction than a sprawling saga.

What to do with this:

- No friction at any choice? Your decisions might be too binary or morally obvious.

- Chronic confusion at a specific node? Your copy, stakes, or visuals aren’t aligned.

- Long, thoughtful pauses at the same scene across testers? That’s a keeper. Build around it.
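If you log the stopwatch pauses and 1–5 ratings described above, a small script can roll them up per choice node and map them onto the three readings in this list. This is an illustration only. The tuple layout, the 8-second “long pause” cutoff, and the rating threshold are assumptions to tune, not Questas features.

```python
from statistics import mean, median

# Hypothetical live-test log: (tester, choice node, hesitation in seconds,
# post-choice difficulty rating 1-5, asked a clarifying question?)
observations = [
    ("t1", "choice_1", 2, 1, False),
    ("t2", "choice_1", 3, 1, False),
    ("t3", "choice_1", 1, 2, False),
    ("t1", "choice_2", 12, 4, True),
    ("t2", "choice_2", 9, 3, True),
    ("t3", "choice_2", 15, 4, False),
    ("t1", "choice_3", 11, 5, False),
    ("t2", "choice_3", 10, 4, False),
    ("t3", "choice_3", 14, 5, False),
]

LONG_PAUSE_S = 8  # assumed cutoff for a "long, thoughtful pause"
EASY_RATING = 2   # assumed: mean difficulty at or below this reads as "obvious"


def friction_by_node(rows):
    """Summarize hesitation, difficulty and confusion for each choice node."""
    by_node = {}
    for _tester, node, secs, rating, asked in rows:
        by_node.setdefault(node, []).append((secs, rating, asked))

    summary = {}
    for node, vals in by_node.items():
        pause = median(v[0] for v in vals)
        difficulty = mean(v[1] for v in vals)
        confused = sum(v[2] for v in vals) / len(vals)  # share who asked "wait, what?"
        if confused >= 0.5:
            reading = "chronic confusion: check copy, stakes, visuals"
        elif pause >= LONG_PAUSE_S:
            reading = "long pauses: a keeper, build around it"
        elif difficulty <= EASY_RATING:
            reading = "no friction: choice may be too obvious"
        else:
            reading = "no clear signal yet"
        summary[node] = {"median_pause_s": pause, "difficulty": difficulty,
                         "confused": confused, "reading": reading}
    return summary


for node, s in friction_by_node(observations).items():
    print(node, s["reading"])
```

The median is used for pauses so that one tester who glances at their phone mid-choice doesn’t dominate a node’s score.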

3. Replay intent (not just actual replays)

With a tiny cohort of maybe 5–10 testers, raw replay numbers are misleading: one extra replay can swing the percentage by 10–20 points.

Instead, look for replay intent:

- Do they say, unprompted, “I want to go back and see what happens if I choose X”?

- Do they ask if there are “secret” paths or alternate endings?

- When you offer them the chance to replay one branch, do they take it?

If people finish a build and simply say, “Cool, thanks,” with no curiosity about alternatives, your branching either isn’t visible enough—or the consequences feel too minor.

What to do with this:

- If replay intent is low, consider sharper divergences at key moments. Posts like “Micro-Video, Macro Impact: Using AI-Generated Video Moments to Punctuate Key Choices in Your Questas” can help you visually signal that “this choice really matters.”
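Because raw replay counts are unreliable at this size, one option is to record the three replay-intent signals listed earlier in this section as yes/no per tester and report the share who showed any of them. A minimal sketch; the `ReplaySignals` fields are hypothetical names for those three signals.

```python
from dataclasses import dataclass


@dataclass
class ReplaySignals:
    tester: str
    asked_what_if: bool       # unprompted "what happens if I choose X?"
    asked_hidden_paths: bool  # asked about secret paths or alternate endings
    accepted_replay: bool     # took the offer to replay one branch


def replay_intent(signals):
    """Share of testers showing at least one replay-intent signal."""
    if not signals:
        return 0.0
    curious = [s for s in signals
               if s.asked_what_if or s.asked_hidden_paths or s.accepted_replay]
    return len(curious) / len(signals)


testers = [
    ReplaySignals("t1", asked_what_if=True, asked_hidden_paths=False, accepted_replay=True),
    ReplaySignals("t2", asked_what_if=False, asked_hidden_paths=False, accepted_replay=False),
    ReplaySignals("t3", asked_what_if=False, asked_hidden_paths=True, accepted_replay=False),
    ReplaySignals("t4", asked_what_if=False, asked_hidden_paths=False, accepted_replay=False),
]
print(f"{replay_intent(testers):.0%} of testers showed replay intent")
# prints: 50% of testers showed replay intent
```

Testers like `t2` and `t4`, who finish with a polite “Cool, thanks,” are the ones worth a follow-up question about whether they noticed the other branches at all.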

What to capture from playtest conversations

Numbers alone can’t tell you why a scene works. That’s where structured notes come in.

During or right after each session, capture:

- Exact phrases people use

  - “I didn’t realize that choice would lock me out of the lab.”

  - “I thought the mentor was on my side until this moment.”

  These lines are gold. They reveal mental models and expectations.

- Moments of visible emotion

  - Laughter, sighs, leaning closer to the screen, or checking their phone.

  - Mark the scene ID in your Questas build each time you see a reaction.

- Navigation questions

  - “Can I go back?”

  - “Wait, how do I see the other outcome?”

  These point to UX friction or unclear affordances.

- Unprompted comparisons

  - “This feels like a training sim.”

  - “This reminds me of a visual novel.”

  These help you understand how players are categorizing your experience.

Then, translate those notes into lightweight narrative analytics:

- Create a simple spreadsheet or doc with columns like Scene ID, Emotion, Quote, Confusion? (Y/N), and Replay Interest? (Y/N).

- After 5–10 testers, patterns will start to emerge.

This is the same mindset that powers posts like “Storyboard to Screen: Using AI-Generated Micro-Video to Pace Tension and Reveal in Your Questas.” You’re tracking not just what happens, but how it feels in the moment.
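Once the notes sheet has a few sessions in it, per-scene patterns can be counted rather than eyeballed. Here is a minimal sketch, assuming you export the sheet as CSV with exactly the column headers suggested above. The sample rows are invented for illustration. For a real file, `open(path, newline="")` can replace the `io.StringIO` wrapper.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export of the notes sheet: one row per observation.
NOTES_CSV = """Scene ID,Emotion,Quote,Confusion? (Y/N),Replay Interest? (Y/N)
s2,tense,"I thought the mentor was on my side until this moment.",N,Y
s2,surprised,,N,Y
s3,puzzled,"I didn't realize that choice would lock me out of the lab.",Y,N
s3,bored,,Y,N
s5,laughing,,N,N
"""


def scene_patterns(csv_text):
    """Count notes, confusion flags, replay interest, and emotions per scene."""
    stats = defaultdict(lambda: {"notes": 0, "confused": 0, "replay": 0, "emotions": []})
    for row in csv.DictReader(io.StringIO(csv_text)):
        s = stats[row["Scene ID"]]
        s["notes"] += 1
        # Y/N columns become 0/1 so they can be summed per scene.
        s["confused"] += (row["Confusion? (Y/N)"].strip().upper() == "Y")
        s["replay"] += (row["Replay Interest? (Y/N)"].strip().upper() == "Y")
        if row["Emotion"]:
            s["emotions"].append(row["Emotion"])
    return dict(stats)


for scene, s in sorted(scene_patterns(NOTES_CSV).items()):
    print(scene, s)
```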

The analytics you can safely ignore (for now)

Some metrics are useful later, but mostly noise in your first few builds.

1. Device breakdowns and browser quirks

Unless your story relies on very specific interactions (e.g., mobile‑only gestures), early on you don’t need a full compatibility matrix.

- If something is obviously broken on a major device, fix it.

- Otherwise, focus on story performance, not pixel‑perfect layout.

2. Deep demographic segmentation

At 10–30 testers, slicing your data by age, role, or region rarely tells you anything reliable.

- Instead, group people by experience with the subject matter or familiarity with interactive stories.

e.g., “First‑time scenario learners” vs. “Experienced role‑players.”

3. Micro‑conversion funnels

Tracking every click as a separate funnel stage makes sense when you’re optimizing for thousands of users. In early Questas builds, it’s overkill.

- You don’t need a chart for “Started Scene 3 → Completed Scene 3 → Clicked Choice 3B.”

- You do need to know “Everyone drops in Scene 3 because the exposition drags.”

4. Time‑on‑page as a primary success metric

Time spent isn’t automatically good or bad.

- A long dwell time could mean deep engagement—or confusion.

- A short dwell time could mean boredom—or that the scene is punchy and effective.

Use time as a supporting signal, not a decision‑maker. Pair it with your playtest notes: “Long dwell + puzzled face” vs. “Long dwell + leaning forward and smiling.”

Turning messy feedback into concrete iteration plans

Once you have a few sessions’ worth of notes and basic metrics, it’s easy to get overwhelmed. Here’s a simple way to turn that chaos into a roadmap.

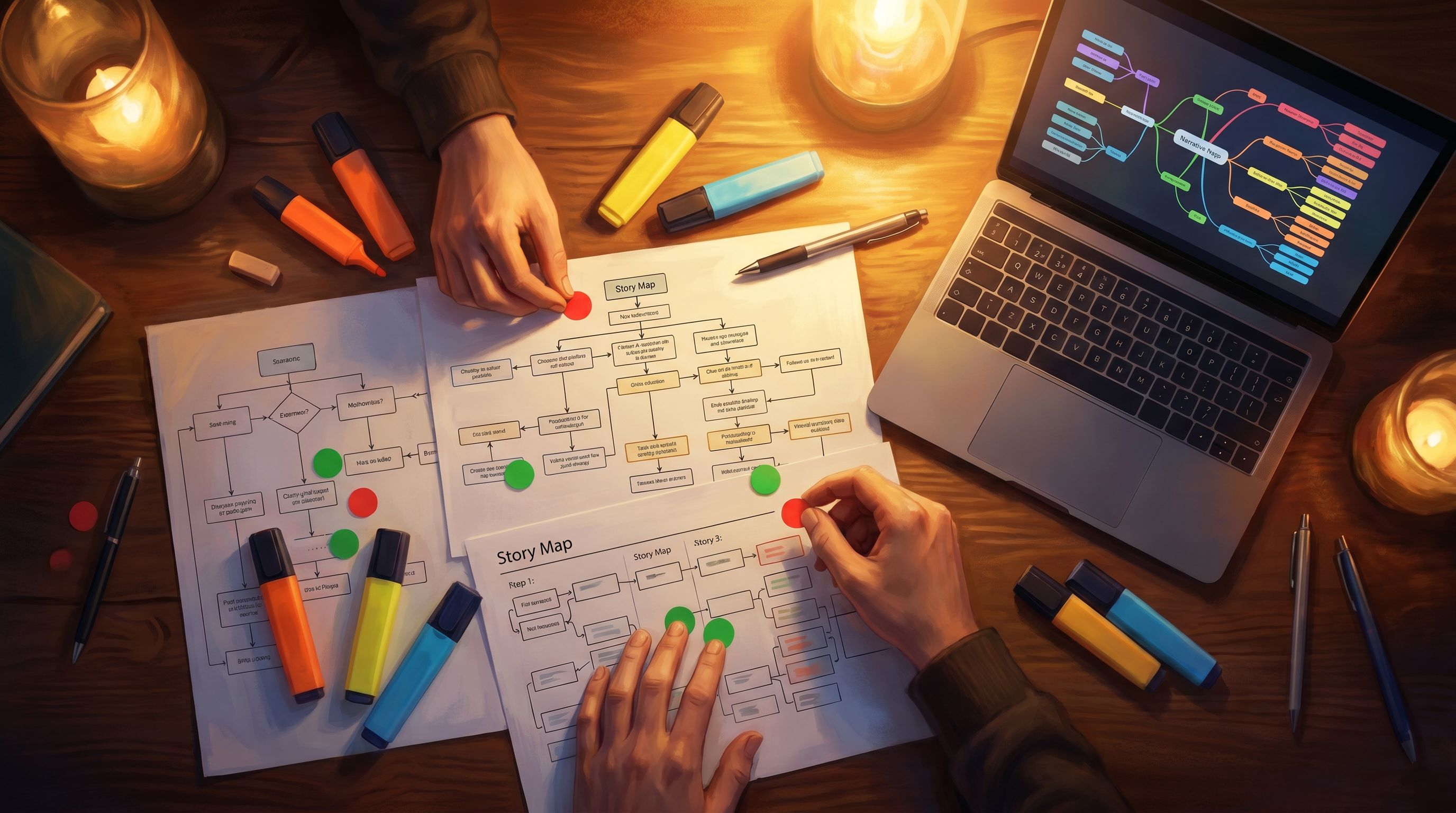

Step 1: Tag your scenes

For each major scene or choice node, give it one or more tags based on your observations:

- HIT – strong emotion, high replay intent, clear stakes.

- MUDDY – repeated confusion, unclear outcomes.

- FLAT – low reaction, low curiosity, but no confusion.

- FRICTION – good tension, but maybe too punishing or unclear.

You can do this right inside your Questas map by color‑coding or annotating nodes.
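If you have been keeping a per-scene notes sheet, first-draft tags can come from simple rates, which you then confirm by eye on the map. This is a sketch under stated assumptions. The `SceneStats` fields (especially `stuck`, meaning testers who backtracked, retried, or gave up at the scene) and the 0.5/0.2 thresholds are illustrative choices. A scene can earn more than one tag, or none.

```python
from dataclasses import dataclass


@dataclass
class SceneStats:
    scene: str
    testers: int           # testers who reached this scene
    strong_reactions: int  # visible emotion: laughter, sighs, leaning in
    confused: int          # rows marked Confusion? = Y
    replay_interest: int   # rows marked Replay Interest? = Y
    stuck: int             # hypothetical: testers who backtracked, retried, or quit here


def tag_scene(s, high=0.5, low=0.2):
    """Draft HIT / MUDDY / FLAT / FRICTION tags from per-scene rates (assumed thresholds)."""
    def rate(n):
        return n / s.testers if s.testers else 0.0

    emotion, confusion = rate(s.strong_reactions), rate(s.confused)
    curiosity, stuck = rate(s.replay_interest), rate(s.stuck)
    tags = []
    if emotion >= high and curiosity >= high and confusion < low:
        tags.append("HIT")       # strong emotion, replay intent, clear stakes
    if confusion >= high:
        tags.append("MUDDY")     # repeated confusion
    if emotion < low and curiosity < low and confusion < low:
        tags.append("FLAT")      # low reaction and curiosity, but not confusing
    if emotion >= high and stuck >= high:
        tags.append("FRICTION")  # real tension, but many testers hit a wall
    return tags


scenes = [
    SceneStats("s2", testers=5, strong_reactions=4, confused=0, replay_interest=4, stuck=0),
    SceneStats("s3", testers=5, strong_reactions=1, confused=3, replay_interest=0, stuck=1),
    SceneStats("s4", testers=5, strong_reactions=0, confused=0, replay_interest=0, stuck=0),
    SceneStats("s6", testers=5, strong_reactions=3, confused=1, replay_interest=2, stuck=3),
]
for s in scenes:
    print(s.scene, tag_scene(s))
```

Treat the output as a starting point. A scene the script calls FLAT might still be a deliberate breather, and only your session notes can tell you that.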

Step 2: Decide your focus for the next build

You don’t need to fix everything at once. Choose one of these lenses for your next revision:

- Clarity pass – Clean up MUDDY scenes: sharper copy, clearer visual cues, more explicit stakes.

- Tension pass – Boost FLAT scenes: introduce time pressure, social consequences, or more interesting trade‑offs.

- Pacing pass – Address FRICTION scenes that feel like walls: add soft gates, hints, or alternate routes (for more on this style of design, see Adaptive Difficulty in Interactive Stories: Using Soft Gates, Hints, and Optional Paths in Your Questas).

Step 3: Make one structural bet

Every iteration, make one bigger structural change based on what you’ve learned:

- Merge two weak branches into a single, stronger path.

- Move a powerful twist earlier, where more players will see it.

- Split an overloaded scene into two smaller beats with a micro‑choice between them.

This keeps your builds evolving meaningfully instead of just getting more polished around the edges.

When to start caring about “real” analytics

At some point, you’ll move from “is this story working at all?” to “how does this perform with a larger audience?”

That’s when it makes sense to layer in more traditional analytics:

- Branch popularity at scale – Which choices are most common across hundreds of players?

- Drop‑off by entry source – Do people who come from a live workshop behave differently than those who find your Questas via a link on your site?

- Session length and return visits – Are people coming back days later to explore new paths?

A good rule of thumb:

- Under 30 players: Rely mostly on qualitative notes + the three core metrics (path completion, choice friction, replay intent).

- 30–100 players: Start tracking simple aggregates (most/least completed branches, average scenes per session).

- 100+ players: Layer in more detailed funnels and segmentation.

Even then, remember: your goal isn’t just higher numbers. It’s stronger stories that do their job—whether that job is teaching a safety protocol, selling a product narrative, or letting someone explore a fictional world.
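For the 30–100 player tier, the “simple aggregates” mentioned above (most and least completed branches, average scenes per session) take only a few lines. The sketch assumes you can get a per-session record of scenes visited and the ending reached, whether from an export or your own logging. That record format is hypothetical, not a documented Questas feature.

```python
from collections import Counter
from statistics import mean

# Hypothetical per-session records: scenes visited in order, and the ending
# reached (None if the player dropped before any ending).
sessions = [
    {"scenes": ["s1", "s2", "s3a", "end_a"], "ending": "A"},
    {"scenes": ["s1", "s2", "s3b"], "ending": None},
    {"scenes": ["s1", "s2", "s3b", "s4b", "end_b"], "ending": "B"},
    {"scenes": ["s1", "s2", "s3a", "end_a"], "ending": "A"},
    {"scenes": ["s1", "s2", "s3c", "end_c"], "ending": "C"},
]


def simple_aggregates(sessions, all_endings):
    """Most/least completed endings and average scenes per session."""
    # Seed every known ending at zero so branches nobody finished still rank last.
    finished = Counter({ending: 0 for ending in all_endings})
    finished.update(s["ending"] for s in sessions if s["ending"] is not None)
    ranked = finished.most_common()  # ties keep first-seen order
    if not sessions or not ranked:
        raise ValueError("need at least one session and one known ending")
    return {
        "most_completed": ranked[0][0],
        "least_completed": ranked[-1][0],
        "avg_scenes_per_session": mean(len(s["scenes"]) for s in sessions),
    }


print(simple_aggregates(sessions, all_endings=["A", "B", "C", "D"]))
```

Passing the full list of endings matters. Without it, an ending no one reached (here `D`) would silently vanish instead of showing up as the least completed.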

Common pitfalls to avoid in early Questas measurement

A few traps creators fall into again and again:

- Treating early testers like “users,” not collaborators.

  In early builds, your playtesters are co‑designers. Invite them to talk out loud, suggest alternate endings, and question your assumptions.

- Chasing “perfect” visuals too soon.

  AI‑generated images and videos are a core strength of Questas, but you don’t need final art to test story beats. Rough, consistent visuals are enough to validate structure and pacing.

- Ignoring edge‑case branches.

  If multiple testers fight to do the “weird” thing (betray the ally, run away, refuse the mission), that’s not noise. That’s a sign you’ve touched a real desire for agency.

- Redesigning everything after each test.

  Make small, surgical changes between small batches of testers. Save bigger overhauls for when you see the same issues across multiple sessions.

Bringing it all together

Early measurement in Questas isn’t about building a perfect analytics stack. It’s about:

- Choosing one clear purpose for each build.

- Focusing on three core signals: path completion, choice friction, replay intent.

- Capturing rich, structured notes from playtest conversations.

- Ignoring metrics that don’t help you answer your current questions.

- Turning patterns into tagged scenes and deliberate iteration passes.

Do this consistently over a few cycles, and you’ll feel a shift: your builds stop being guesswork and start feeling like experiments. Each new version exists to answer a specific narrative question—and your analytics, light as they are, tell you whether it did.

Ready to run your next experiment?

You don’t need a massive audience or a complex dashboard to start learning from your stories. You just need:

- A small, focused Questas build.

- 5–10 willing playtesters.

- A notebook (or doc) ready to catch what happens.

Open your next project and ask yourself:

“What is this build trying to learn?”

Then design your scenes, choices, and questions around that. Treat every playthrough as data. Treat every note as a clue.

Your stories will get sharper. Your visuals will land harder. And by the time you’re ready for full‑scale analytics, you’ll already know exactly what you’re looking for.

Adventure awaits—go run that next test.